EU AI Regulation (AI Act)

AI is increasingly becoming part of our everyday lives – and with that, the dangers are also growing. When machines participate in decisions about people, generate deceptively real content, or influence sensitive areas such as employment, health, and safety, the consequences can be serious. For this reason, the AI Regulation was introduced: to define clear boundaries, prevent misuse, and protect people from the risks of problematic AI applications. At the same time, it is intended to ensure that innovation within the EU remains possible, but on a reliable, human-centered foundation. In this way, the Regulation creates a framework in which progress does not come at the expense of fundamental rights, safety, and trust.

Artificial Intelligence Act

The current EU AI Regulation is officially called Regulation (EU) 2024/1689 (Artificial Intelligence Act, or AI Act). It was adopted on June 13, 2024, published in the Official Journal of the EU on July 12, 2024, and entered into force on August 1, 2024. Its purpose is to establish a uniform legal framework for human-centered and trustworthy AI in the internal market, to promote innovation, and at the same time to protect health, safety, and fundamental rights. It covers AI systems and general-purpose AI models that are placed on the market or put into service in the EU, as well as AI systems whose output is used in the EU. This applies even if the provider is based in a third country.

What are the guidelines of the AI Regulation, and what must be complied with?

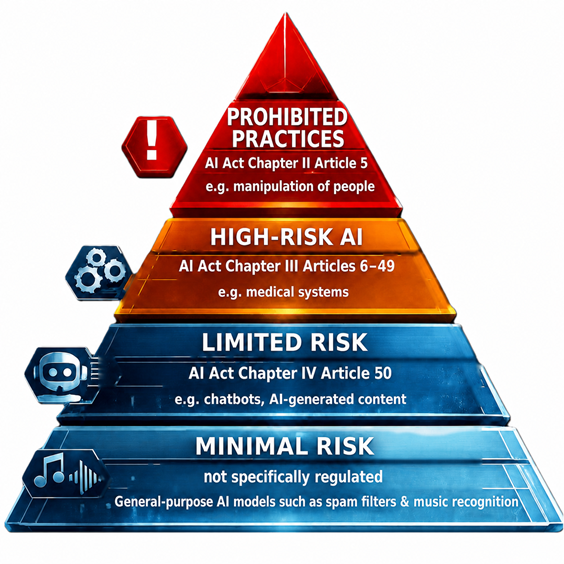

The prohibited practices under Article 5 include, among other things, harmful manipulation and deception, harmful exploitation of vulnerabilities, social scoring, predicting the risk that a person will commit a crime based solely on profiling or personality traits, untargeted scraping of facial images from the internet or CCTV footage to build facial recognition databases, emotion recognition in the workplace and in educational institutions, biometric categorization to infer sensitive characteristics, and certain uses of real-time remote biometric identification in publicly accessible spaces for law enforcement purposes. These prohibitions have applied since February 2, 2025.

High-risk AI systems are subject to requirements including risk management, data quality and data governance, technical documentation, record-keeping (logging), transparency and information for deployers, human oversight, as well as accuracy, robustness, and cybersecurity. Providers of such systems must also, among other things, operate a quality management system, carry out a conformity assessment before placing the system on the market, draw up an EU declaration of conformity, and affix the CE marking.

For certain AI systems with limited risk, transparency obligations apply: providers of systems that interact directly with people must generally ensure that the persons concerned are informed that they are interacting with AI; for systems that generate synthetic audio, image, video, or text content, the outputs must be marked in a machine-readable format and be detectable as artificially generated or manipulated.
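To make the two transparency obligations above more concrete, here is a minimal sketch of what they could look like in code. The Regulation does not prescribe a particular technique; its recitals mention approaches such as watermarks, metadata, and cryptographic provenance methods. The disclosure wording, the function names, and the JSON fields below are all hypothetical and are not C2PA or any other published standard.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical disclosure wording: the AI Act requires that users be informed,
# but it does not prescribe this text.
AI_DISCLOSURE = "Note: you are chatting with an AI system, not a human."


def open_chat_transcript(first_reply: str) -> list[str]:
    """Start a chat transcript so the AI disclosure is shown before any reply."""
    return [AI_DISCLOSURE, first_reply]


def ai_content_manifest(content: bytes, generator: str) -> str:
    """Build a machine-readable JSON label marking content as AI-generated.

    The field names form a hypothetical schema for illustration only;
    they are not C2PA or any other published standard.
    """
    manifest = {
        "ai_generated": True,                           # the core machine-readable flag
        "generator": generator,                         # which model produced the content
        "sha256": hashlib.sha256(content).hexdigest(),  # binds the label to this exact content
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(manifest, sort_keys=True)


if __name__ == "__main__":
    print(open_chat_transcript("Hello! How can I help you today?")[0])
    print(ai_content_manifest(b"An AI-written product description.", "example-model"))
```

A sidecar label like this is easy to strip, which is why robust watermarks or signed provenance data are often discussed as complements; the sketch only illustrates the idea of a machine-readable marker.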

In addition, the AI literacy obligation has applied since February 2, 2025: providers and deployers must take measures to ensure, to the best of their ability, that their staff and other persons acting on their behalf have a sufficient level of AI literacy, taking into account their knowledge, experience, training, and the context of use.

For general-purpose AI models (GPAI models), additional obligations have applied since August 2, 2025, including technical documentation, information sharing with downstream providers, a copyright policy, and a public summary of the training content. For models with systemic risk, these are supplemented by model evaluations including adversarial testing, risk mitigation, reporting of serious incidents, and cybersecurity measures.

4 practical examples

Practical example 1: Emotion recognition in job applications. An AI evaluates applicants’ facial expressions or voice in order to draw conclusions about their emotions or suitability. This is prohibited under Article 5 (Chapter II) of the AI Act.

Practical example 2: AI for applicant selection / CV screening. Employment, workers management, and access to self-employment are among the sensitive high-risk areas. Such a system cannot simply be put into live use; it requires, among other things, risk management, documentation, logs, information for the deployer, human oversight, and a conformity assessment before it is placed on the market.

Practical example 3: Website chatbot. A company uses an AI chatbot in customer service. In that case, the user must generally be able to recognize that they are interacting with an AI system. This is a typical case of the transparency obligation and falls within the limited-risk category.

Practical example 4: AI image/text generator. If a system generates synthetic images, audio, video, or texts, these outputs must be marked under the Regulation in such a way that they can be recognized as artificially generated or manipulated.

How strictly must the AI Act be complied with?

The AI Regulation is not a voluntary code, but a binding EU regulation. It applies in stages: prohibitions and AI literacy since February 2, 2025, GPAI rules since August 2, 2025, most other obligations from August 2, 2026, and for high-risk AI in certain regulated products, an extended deadline applies until August 2, 2027.
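The staged timetable can be expressed as a simple date lookup. This is a minimal sketch that encodes only the four application dates named above; the function name and the group labels are hypothetical simplifications, not official categories.

```python
from datetime import date

# The four application dates named in the text, as (start date, obligation group).
MILESTONES = [
    (date(2025, 2, 2), "prohibited practices and AI literacy"),
    (date(2025, 8, 2), "GPAI model rules"),
    (date(2026, 8, 2), "most other obligations"),
    (date(2027, 8, 2), "high-risk AI in certain regulated products"),
]


def obligations_in_force(on: date) -> list[str]:
    """Return the obligation groups that already apply on the given date."""
    return [label for start, label in MILESTONES if on >= start]


if __name__ == "__main__":
    print(obligations_in_force(date(2026, 1, 15)))
```

For January 15, 2026, the lookup returns the first two groups: the prohibitions and AI literacy, plus the GPAI rules.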

It is also enforceable: market surveillance authorities can require corrective measures and, if a system is not compliant, require that it be brought into conformity, withdrawn from the market, or recalled. The authority may set the deadline for this, which is in any event no later than 15 working days or the period laid down in the relevant Union product legislation, whichever is shorter.
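The "whichever is shorter" rule for corrective deadlines is simple date arithmetic. The sketch below computes the outer limit under two loudly stated assumptions: counting starts on the day after notification, and only Saturdays and Sundays are non-working days (public holidays are ignored). The function names are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional


def add_working_days(start: date, days: int) -> date:
    """Count forward `days` working days (Mon-Fri) after `start`.

    Assumption: public holidays are ignored; only weekends are skipped.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0 = Monday ... 4 = Friday
            remaining -= 1
    return current


def latest_corrective_deadline(notified: date, product_law_deadline: Optional[date] = None) -> date:
    """Outer limit: 15 working days or the product-law deadline, whichever is shorter."""
    fifteen_working_days = add_working_days(notified, 15)
    if product_law_deadline is None:
        return fifteen_working_days
    return min(fifteen_working_days, product_law_deadline)


if __name__ == "__main__":
    print(latest_corrective_deadline(date(2025, 3, 3)))
```

For a notification on Monday, March 3, 2025, the 15-working-day limit falls on Monday, March 24, 2025; the authority can of course set an earlier date.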

For prohibited practices, the situation is particularly strict: the Commission guidelines explicitly clarify that the prohibitions themselves have direct effect and that affected persons can already enforce them before national courts and apply for interim measures.

That may sound daunting at first, but don’t worry: much of it is simply common sense. Using AI to oppress, discriminate against, or manipulate people is prohibited, just as such conduct would be without AI. High-risk AI calls for particular care: where sensitive data is processed, for example in medicine, data protection and confidentiality must be strictly observed. Chatbots and similar tools should be clearly labeled as AI, and texts, images, videos, music, and other content generated with AI should be labeled accordingly.

Where exactly does the current AI Regulation apply?

The Regulation’s scope is anchored in the EU internal market. It applies to providers who place AI systems or GPAI models on the market or put AI systems into service in the EU, to deployers established in the EU, and also to providers and deployers from third countries if the output of their AI system is used in the EU. The official title also carries the addition “Text with EEA relevance,” which marks it for incorporation into the EEA Agreement with Iceland, Liechtenstein, and Norway.

The Regulation is therefore not tied only to physical products. A system can also be placed on the Union market via API, cloud, online access, direct download, or embedded in physical products.

What happens if you work with companies outside the EU or use their models?

If an EU company uses a model from a non-EU provider, the EU company may, depending on its role, be a deployer and itself fall under the AI Regulation. At the same time, the non-EU provider itself may also fall under the Regulation if it makes an AI system or GPAI model available on the Union market or if the output of its system is used in the EU.

For non-EU providers of GPAI models, the following applies: before placing them on the Union market, they must generally appoint an authorized representative in the EU. The same applies to non-EU providers of high-risk AI systems before these are made available on the Union market.

If personal data is processed in the course of cooperation, the GDPR also continues to apply. The EDPB emphasizes that a data protection legal basis may be required for the development and deployment of AI models and that a model trained on unlawfully processed personal data may impair the lawfulness of its use unless it has been effectively anonymized.

What consequences can arise if the AI Regulation is not complied with?

The Regulation provides for sanctions and other enforcement measures that must be effective, proportionate, and dissuasive; these may also include warnings and non-monetary measures. In addition, authorities may require corrective measures, market withdrawal, or recall in cases of non-compliance.

The key maximum fines under Article 99 are:

- up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher, for violations of the prohibited practices under Article 5;

- up to EUR 15 million or 3% of worldwide annual turnover, whichever is higher, for violations of key obligations of providers, authorized representatives, importers, distributors, deployers, or notified bodies, as well as of the transparency obligations;

- up to EUR 7.5 million or 1% of worldwide annual turnover, whichever is higher, for supplying incorrect, incomplete, or misleading information to authorities or notified bodies.
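The "whichever is higher" rule means the turnover-based cap only matters for large companies. A minimal sketch of the arithmetic, using the three tiers listed above; the tier keys and function name are hypothetical. It also encodes Article 99(6), under which, for SMEs including start-ups, the lower of the two amounts applies instead.

```python
from decimal import Decimal

# Article 99 caps as listed above: (fixed amount in EUR, share of worldwide annual turnover).
FINE_CAPS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "key_obligation": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine(tier: str, worldwide_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum possible fine for a tier (an upper bound, not a prediction)."""
    fixed, share = FINE_CAPS[tier]
    turnover_based = worldwide_turnover_eur * share
    # Default: whichever is higher. Art. 99(6): for SMEs, whichever is lower.
    return min(fixed, turnover_based) if is_sme else max(fixed, turnover_based)


if __name__ == "__main__":
    print(max_fine("prohibited_practice", Decimal("1000000000")))
```

For example, a company with EUR 1 billion in worldwide annual turnover faces up to EUR 70 million for a prohibited practice, because 7% of its turnover exceeds EUR 35 million. The Decimal type avoids floating-point rounding in currency amounts.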

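In addition, affected persons can lodge a complaint with the competent market surveillance authority. For certain decisions based on the output of a high-risk AI system, affected persons also have a right to a clear and meaningful explanation of the role the AI system played in the decision-making procedure and of the main elements of the decision.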

Practical examples of violations that can lead to serious consequences

- Emotion recognition in recruiting: the Commission guidelines explicitly state that the use of emotion recognition AI during the recruiting process is prohibited.

- Emotion recognition in employment relationships: according to the guidelines, prohibited examples include systems that infer the emotional state of members of hybrid teams from voice and image, as well as use during probation periods or for monitoring employees.

- Biometric categorization based on assumed political orientation: the guidelines cite as a prohibited example a system that categorizes people on a social media platform according to their assumed political orientation based on biometric data from photos in order to send them targeted political messages.

- Untargeted scraping of facial images: the untargeted extraction of facial images from the internet or CCTV in order to create or expand facial recognition databases is prohibited.

What questions should I ask myself before using an AI tool in the company?

For every specific AI tool or AI workflow in your company, first check:

- Is it actually an AI system or GPAI model within the meaning of the Regulation?

- Does the use case fall under prohibition, high risk, transparency, or minimal risk?

- Am I processing personal data, and does the GDPR also apply?

- Am I using a non-EU provider via API/cloud, so that third-country obligations become relevant?

- Do I only need transparency notices, or also documentation, logs, human oversight, and a conformity assessment?
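The checklist above can be turned into a rough internal triage helper. This is a simplified sketch, not a legal classification: the `UseCase` fields and the to-do wording are hypothetical, and each answer still needs a proper assessment of the specific use case.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """Hypothetical answers to the checklist questions for one AI tool."""
    is_ai_system: bool
    prohibited_practice: bool          # e.g. emotion recognition in recruiting
    high_risk_area: bool               # e.g. CV screening, credit decisions
    interacts_with_people: bool        # e.g. a website chatbot
    generates_synthetic_content: bool  # e.g. an image or text generator
    processes_personal_data: bool
    non_eu_provider: bool


def triage(uc: UseCase) -> list[str]:
    """Return a rough to-do list derived from the checklist answers."""
    if not uc.is_ai_system:
        return ["outside the AI Act's scope"]
    if uc.prohibited_practice:
        return ["STOP: prohibited practice under Article 5"]
    todos = ["ensure AI literacy of staff"]
    if uc.high_risk_area:
        todos.append("high-risk: documentation, logs, human oversight, conformity assessment")
    if uc.interacts_with_people:
        todos.append("transparency: tell users they are interacting with AI")
    if uc.generates_synthetic_content:
        todos.append("transparency: mark outputs as AI-generated (machine-readable)")
    if uc.processes_personal_data:
        todos.append("GDPR: legal basis and data protection measures")
    if uc.non_eu_provider:
        todos.append("check the provider's GPAI and authorized-representative obligations")
    return todos


if __name__ == "__main__":
    chatbot = UseCase(is_ai_system=True, prohibited_practice=False, high_risk_area=False,
                      interacts_with_people=True, generates_synthetic_content=False,
                      processes_personal_data=True, non_eu_provider=True)
    for item in triage(chatbot):
        print("-", item)
```

For a customer-service chatbot built on a non-EU model that processes personal data, the helper yields four items: AI literacy, the AI disclosure, GDPR, and a check of the provider's obligations. A prohibited practice short-circuits everything else by design.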

A simple example of compliance with the AI Regulation

A German company uses a US LLM via API for a website chatbot in customer service. The company proceeds correctly if it a) determines its role as deployer, b) checks whether this is merely a transparency case or a high-risk case, c) clearly informs users that they are interacting with AI, d) trains employees in AI literacy and documents these measures, e) also observes GDPR requirements where personal data is involved, and f) if it uses a GPAI model from a third-country provider, verifies that the provider meets the relevant GPAI and authorized-representative obligations. For an ordinary website chatbot, this would typically be mainly a transparency and governance issue; by contrast, an AI system for applicant preselection or credit decisions would usually be subject to significantly stricter high-risk requirements.

If you still have questions, uncertainties, or too much skepticism about AI, then take a look at our training courses. Maybe there is something there for you.